TL;DR: 84% of developers now use or plan to use AI coding tools (Stack Overflow, 2025). I built a working AI Visibility Audit tool using Claude, ChatGPT, and Gemini without writing a single line of code. The hardest part was not the AI. It was knowing what to build and holding the vision when the AI kept losing the thread.

Why I Built an AI Tool Without Writing Code

Over 30% of new code at Google is now AI-generated, up from 25% six months earlier (Fortune, 2025). Andrej Karpathy called this shift “vibe coding,” a term that Collins Dictionary named Word of the Year for 2025. I wanted to test whether vibe coding could produce something real, not a demo or a toy, but a tool that delivers value to actual users.

I am not a developer. I can read code, talk to developers, and hold my own in a product meeting, but I have never shipped production code. Could someone with domain expertise and zero coding ability build functional software using AI?

The tool I had in mind was an AI Visibility Audit for 2Stallions, the digital marketing agency I run. Brands are losing traffic as AI engines replace traditional search results, and most have no idea how they appear in ChatGPT, Perplexity, or Google’s AI Overviews. I wanted to build something, without code, that would show them.

What Does the AI Visibility Audit Actually Do?

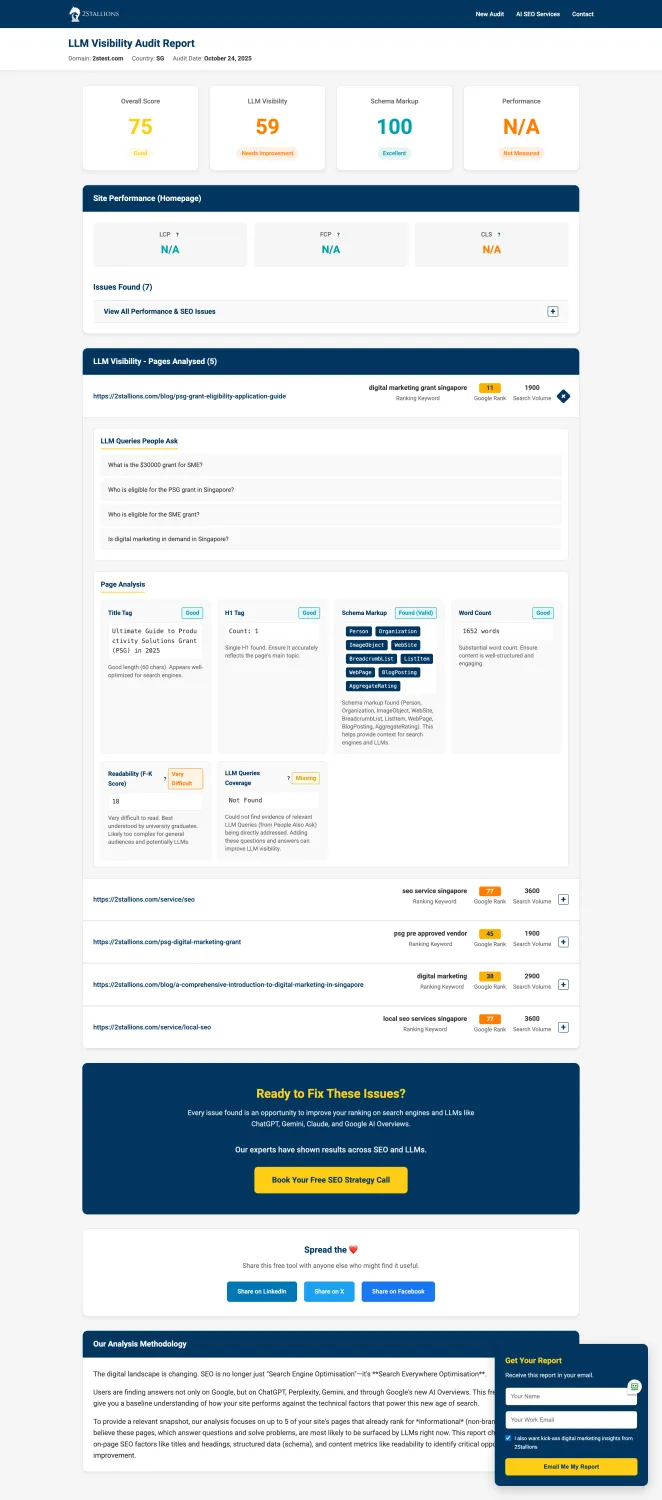

Gartner projects that 75% of new applications will use low-code development by 2026, up from 40% in 2021 (InfoWorld). This tool is one of them. You enter a URL and a target country, and within 60 seconds it picks up to five pages on your site, chosen by the search volume of the informational keywords each page ranks for.

For each page, the audit checks three things:

- A PageSpeed performance test to flag load time issues

- A schema markup analysis, with specific attention to FAQ schema

- Whether your pages answer any People Also Ask questions that Google surfaces for your target keywords

The output is a single report with scores, identified issues, and specific recommendations. No email required to run it, though leaving one gives you access to your results for seven days.
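To make the schema check concrete, here is a minimal Python sketch of how a page could be tested for FAQ schema. It is an illustration under stated assumptions, not the code the live tool runs: the names `JsonLdCollector` and `has_faq_schema` are mine, and it only looks at JSON-LD script tags, so FAQ markup written as microdata would be missed.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> tag."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        # Script content can arrive in several chunks, so buffer it.
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def _types_in(node):
    """Yield every @type value found anywhere in a parsed JSON-LD structure."""
    if isinstance(node, dict):
        declared = node.get("@type")
        if isinstance(declared, str):
            yield declared
        elif isinstance(declared, list):
            yield from (t for t in declared if isinstance(t, str))
        for value in node.values():
            yield from _types_in(value)
    elif isinstance(node, list):
        for item in node:
            yield from _types_in(item)


def has_faq_schema(html):
    """Return True if any JSON-LD block on the page declares the FAQPage type."""
    collector = JsonLdCollector()
    collector.feed(html)
    for raw in collector.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # Malformed markup counts as "no schema found".
        if "FAQPage" in _types_in(data):
            return True
    return False
```

Calling `has_faq_schema(page_html)` on the fetched HTML of each audited page gives a yes or no answer that can feed straight into the report.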

How Did Three AI Platforms Build One Tool?

GitHub Copilot added 5 million users in just three months in mid-2025, hitting 20 million total (TechCrunch). The AI coding market is growing fast. But using these tools for a full build from scratch, without writing code yourself, is a very different experience from autocompleting a line inside an IDE.

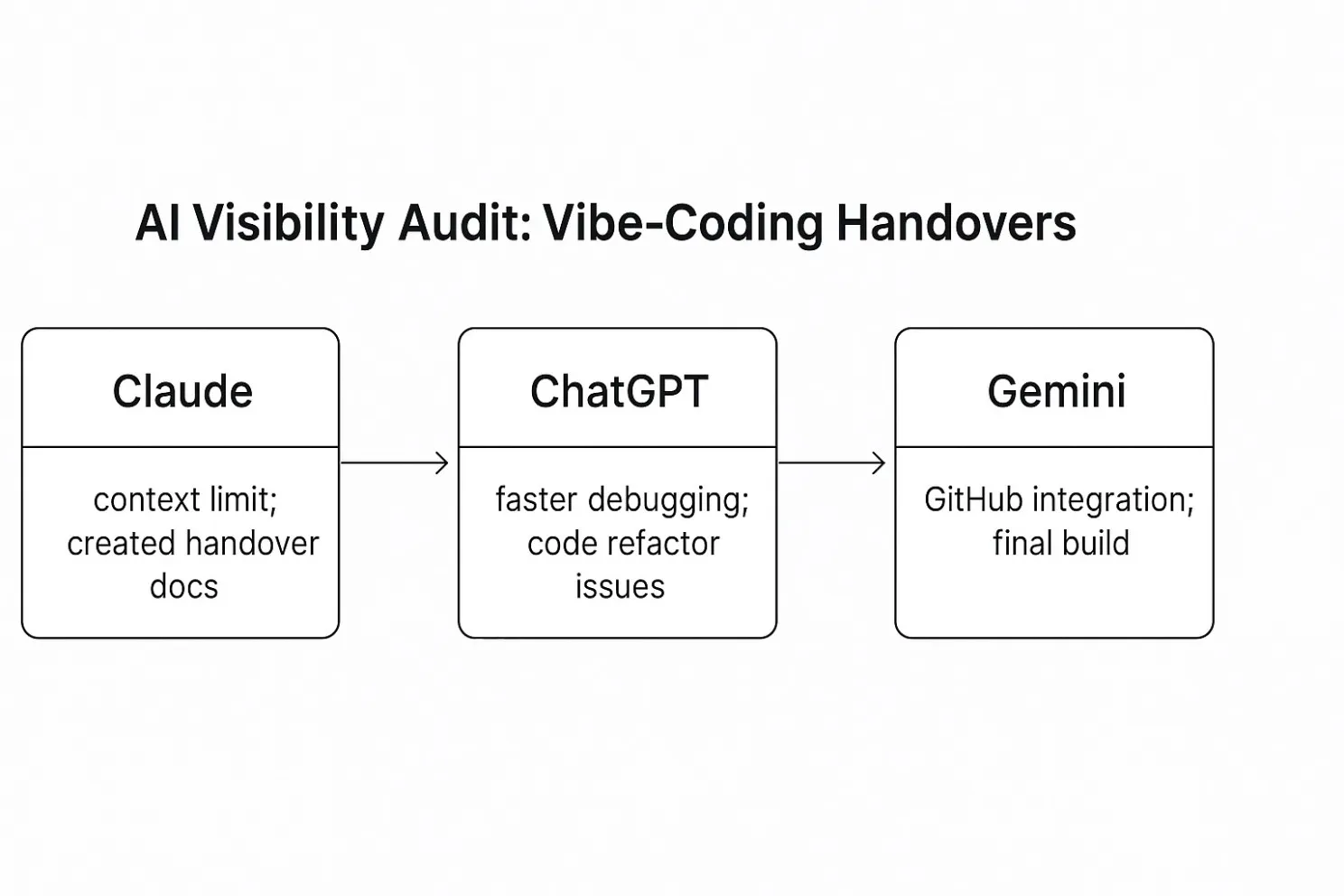

I started with Anthropic’s Claude. The interface was clean, testing was straightforward, and Claude was the best thinking partner for working through architecture decisions. It asked clarifying questions and pushed back when requirements were vague.

Halfway through, I hit the context window limit. Claude told me to wait five hours before continuing, which was my introduction to one of the real constraints of AI-assisted building.

So I asked Claude to generate a handover document summarising every decision, every code file, and the current state of the project. Then I moved to ChatGPT.

ChatGPT was faster at debugging and deployment guidance, producing code blocks I could test immediately with less hedging. But it had a different problem: it kept renaming variables across files.

Code that worked in one file would break because ChatGPT decided auditScore should become score in a related file. I spent hours tracing errors that the AI itself had introduced.
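A small guard can surface this kind of silent rename as soon as it happens. The sketch below is in Python purely for illustration. Only `auditScore` and `score` come from my build. The other field names and the function `check_report_fields` are hypothetical. It compares a report payload against the field names agreed in the spec and lists anything missing or unexpected.

```python
# Field names the spec agreed on. Only "auditScore" is from the real build;
# "issues" and "recommendations" are hypothetical names for illustration.
REQUIRED_FIELDS = frozenset({"auditScore", "issues", "recommendations"})


def check_report_fields(report):
    """Return (missing, unexpected) field names for one report payload."""
    present = set(report)
    missing = sorted(REQUIRED_FIELDS - present)
    unexpected = sorted(present - REQUIRED_FIELDS)
    return missing, unexpected


# A payload produced after the AI renamed auditScore to score.
renamed = {"score": 72, "issues": [], "recommendations": []}
missing, unexpected = check_report_fields(renamed)
print("missing:", missing, "unexpected:", unexpected)
```

Running a check like this after every AI-generated change turns hours of error tracing into a one-line failure that names the renamed field.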

After creating another handover document, I switched to Google’s Gemini. Gemini connected directly to GitHub and offered a much larger context window, so it could see the whole project at once. The deployed version came together in Gemini.

Here is how each platform compared:

| Platform | Best for | Limitation | Role in my build |
|---|---|---|---|
| Claude | Architecture, spec writing, clarifying questions | Hit context window limit mid-build | Initial spec and code structure |
| ChatGPT | Rapid code generation, debugging | Renamed variables across files, breaking working code | Core development and debugging |
| Gemini | GitHub integration, large context window | Less opinionated on architecture decisions | Final integration and deployment |

What Happens When You Let AI Design the Whole Thing?

Only 29% of developers trust AI tool accuracy, down from 40% the year before (Stack Overflow, 2025). After this build, I understand why that number keeps dropping.

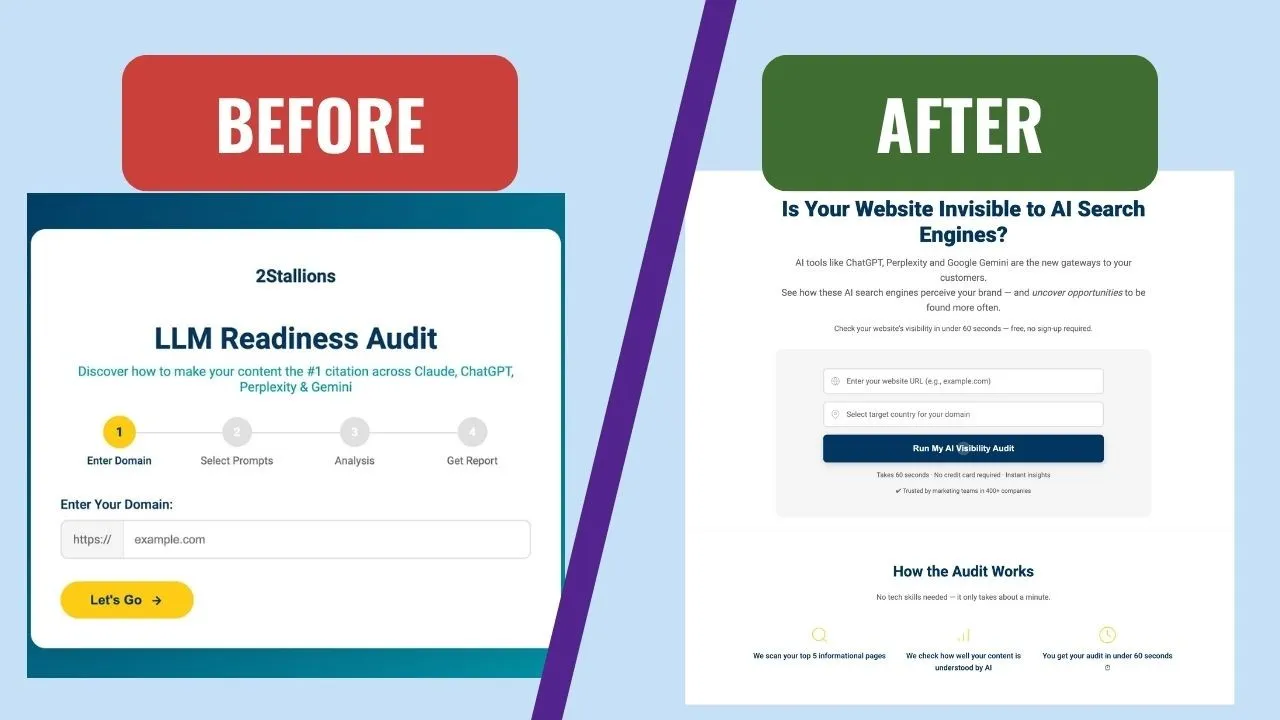

My biggest mistake happened at the very start. I told Claude what I wanted the tool to do, then asked it to figure out how to build it: the architecture, the tech stack, the user flow, the data model. I treated AI as the product manager, not just the builder.

The first version looked polished, with a clean UI and logical flow on paper. But when I started using it, the tool was too complicated: too many steps, too many inputs, too many screens. I didn’t want to use it myself, which is always a clear sign your users won’t either.

That was the real lesson from this build. AI needs a human who has mapped out the vision, not just described the idea. The difference sounds small but it is enormous in practice.

“Build me a tool that audits AI visibility” is an idea. “Take a URL and country, find the top 5 informational pages by search volume, run PageSpeed, check for FAQ schema, and match against PAA questions, then display a single-page report with scores” is a vision. AI can execute the second. It will wander endlessly with the first.
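One way to see the difference is to write the vision down as structured data before any prompting starts. The sketch below is a hypothetical Python rendering of the sentence above, not something taken from my actual build. The class name `AuditSpec`, its field names, and the validation rules are all assumptions made for illustration.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class AuditSpec:
    """The 'vision' version of the brief: every input and output is pinned down."""

    url: str
    country: str
    max_pages: int = 5
    page_selection: str = "informational keywords by search volume"
    checks: tuple = ("pagespeed", "faq_schema", "paa_match")
    output: str = "single-page report with scores"

    def problems(self):
        """Return a list of reasons this spec is still too vague to build from."""
        found = []
        parsed = urlparse(self.url)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            found.append("url must be a full http(s) address")
        if not self.country.strip():
            found.append("country is required")
        if not 1 <= self.max_pages <= 5:
            found.append("max_pages must be between 1 and 5")
        if not self.checks:
            found.append("at least one check is required")
        return found


spec = AuditSpec(url="https://example.com", country="Singapore")
print(spec.problems())
```

If you cannot fill in every field without guessing, you still have an idea and not a vision, and the AI will fill the gaps with its own guesses.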

Where Does AI as Co-Pilot Actually Help?

A CodeRabbit study of 470 open-source pull requests found that AI-co-authored code contains 1.7x more issues than human-written code (2025). Security vulnerabilities were up to 2.74x more common. The code works, mostly, but “mostly” is doing a lot of heavy lifting when you are shipping a tool for real users.

Think of it as managing a team of brilliant but inconsistent contributors. Each one produces in minutes what would take a junior developer days, and they occasionally surprise you with insights you hadn’t considered.

But they also hallucinate and forget what you told them three prompts ago. They confidently produce code that looks correct but fails on edge cases.

As I learned with ChatGPT’s variable renaming habit, they sometimes “improve” working code in ways that break it. 66% of developers report frustration with AI solutions that are “almost right, but not quite” (Stack Overflow, 2025), which matches my experience exactly.

Where AI genuinely excels as a co-pilot: spec development and architecture brainstorming (Claude), rapid code generation and debugging (ChatGPT), and integration across a full codebase with GitHub connectivity (Gemini). Treating them as interchangeable is a mistake.

What Should Non-Technical Operators Know Before Vibe-Coding?

77% of professional developers say vibe coding is not part of their work (Stack Overflow, 2025). For non-developers trying to build without code, the barrier is even higher. Here is what I learned from being on the other side of that stat.

Prompting is project management. The quality of your output tracks directly with the quality of your instructions. Specific prompts with clear constraints and expected formats produce useful results, while vague prompts produce vague outputs. If you can write a good project brief, you can prompt AI effectively.

I could build this tool because I understood SEO, AI visibility, and what a useful audit looks like. Every decision about what to build, what data to analyse, and what recommendations to surface came from years of running an agency. A developer without that marketing knowledge could not have built this tool, even with the same AI platforms. AI amplifies domain expertise. It does not replace it.

Verification took as much time as generation. Every output needed testing, not spot-checking, because CodeRabbit’s research on 470 pull requests confirms that AI-written code ships with significantly more issues than human-written code.

Keep a running document outside the AI. Context windows are large now, with some Claude, GPT-4.1, and Gemini models offering up to around 1 million tokens, but complex builds will still bump against those limits. When you do, the AI forgets earlier decisions and contradicts itself. Your external spec document becomes the AI’s memory.
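The running document does not need to be fancy. As a rough sketch, the Python below keeps the goal, current state, decisions, and file list in one place and renders them as plain text to paste at the top of a new AI session. The class name `Handover`, its fields, and the example file name `report.php` are hypothetical. My own handover documents were written by the AI platforms themselves.

```python
from dataclasses import dataclass, field


@dataclass
class Handover:
    """A running project record that lives outside any AI conversation."""

    goal: str
    current_state: str
    decisions: list = field(default_factory=list)
    files: dict = field(default_factory=dict)  # path -> one-line purpose

    def decide(self, text):
        """Record a decision the next AI session must not silently undo."""
        self.decisions.append(text)

    def render(self):
        """Build the plain-text handover to paste into a fresh session."""
        lines = [
            "PROJECT HANDOVER",
            f"Goal: {self.goal}",
            f"Current state: {self.current_state}",
            "",
            "Decisions (do not change without asking):",
        ]
        lines += [f"{i}. {d}" for i, d in enumerate(self.decisions, 1)] or ["(none recorded)"]
        lines += ["", "Files:"]
        lines += [f"- {path}: {purpose}" for path, purpose in self.files.items()] or ["(none recorded)"]
        return "\n".join(lines)


doc = Handover(
    goal="Audit AI visibility for a URL and country",
    current_state="Report page renders; deployment pending",
)
doc.decide("The score field is named auditScore in every file")
doc.files["report.php"] = "renders the single-page report"  # hypothetical file
print(doc.render())
```

Updating a record like this after every working change means a platform switch, or a forgotten instruction, costs a paste and not a rebuild.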

Is the Tool Worth Using?

80% of new developers on GitHub now use Copilot within their first week (GitHub Blog, 2026). AI-assisted building is becoming the default starting point, even for people who are not developers by training.

The AI Visibility Audit is live at ai-audit.2stallions.com and free to run with no sign-up required. 2Stallions uses it as a lead magnet, and conversions from the page have been strong.

The tool does exactly what I wanted: it shows a brand how its content appears (or doesn’t appear) in AI-driven search, identifies specific issues, and gives the brand a reason to talk to us about fixing those problems. It runs on a LAMP stack so our development team can maintain and extend it without depending on me or any AI platform.

Could a professional developer have built this faster and better? Absolutely. Would we have allocated developer time to an experimental lead magnet tool? Probably not.

That’s the real value of vibe-coding for operators. It doesn’t replace professional development. It unlocks projects that would never have made it to the priority queue.


Frequently Asked Questions

Can you build software with AI and no coding experience?

Yes, with significant caveats. I built a working AI audit tool using Claude, ChatGPT, and Gemini without writing code. The key requirement is domain expertise: you need to know what the tool should do, how data should flow, and what the output should look like. AI handles implementation, not the thinking.

Which AI platform is best for vibe-coding?

No single platform is best for a full build. Claude excels at architecture and specification, ChatGPT is faster for code generation and debugging, and Gemini integrates well with GitHub for codebase-wide tasks. Using multiple platforms sequentially with handover documents produced better results than forcing one to do everything.

How long does it take to build an AI tool without coding?

Longer than the marketing suggests. My build took several weeks, including a scrapped first version. The AI generation is fast, but debugging, verifying outputs, managing context window limits, and iterating on product design took most of the time. Expect to spend as much time testing as generating.

What is vibe coding?

A term coined by Andrej Karpathy in February 2025 to describe software development where you describe what you want in natural language and let AI generate the code. Collins Dictionary named it Word of the Year for 2025. 84% of developers now use or plan to use AI coding tools (Stack Overflow, 2025).

Is AI-generated code reliable?

Not without human review. A CodeRabbit study of 470 pull requests found AI-co-authored code has 1.7x more issues and up to 2.74x more security vulnerabilities than human-written code (2025). Only 29% of developers trust AI tool accuracy. Every output needs thorough testing.

Do you need to know a programming language to vibe-code?

Not necessarily, but understanding basic concepts like variables, APIs, and data flow helps. I don’t write code, but I can read it well enough to spot when something looks wrong. The more technical context you bring, the better you can verify AI outputs and catch errors before they compound.