The AI Fluency Gap in the Boardroom

Two out of three board directors report “limited to no knowledge” of AI, and nearly one in three say AI does not even appear on their agendas (McKinsey, Dec 2025). A decade ago, sitting on a board without understanding financial statements made you a liability. AI fluency has reached that same threshold.

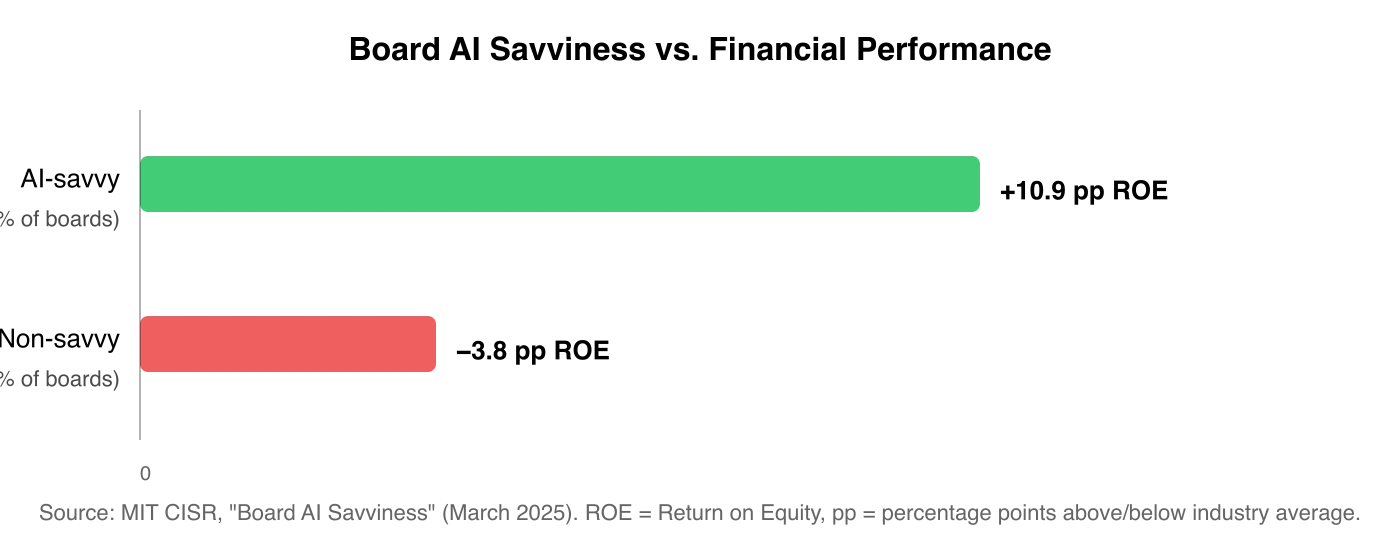

TL;DR: Only 26% of boards are AI-savvy, yet those boards outperform their industry peers by 10.9 percentage points on ROE (MIT CISR, Mar 2025). Directors don’t need to code, but they need to understand four categories of AI: generative AI, AI agents, operational automation, and AI governance. This article breaks down what each means for board-level decisions.

I talk with founders and directors across Southeast Asia regularly, and the AI fluency gap is consistent. Most board members can discuss AI in broad terms, maybe even approve an AI budget. But ask them to explain the difference between a generative AI tool and an autonomous AI agent, and the conversation stalls.

Last year, I sat in an advisory session with a board in Singapore where a director kept referring to their customer support chatbot as “our AI agent.” It wasn’t. It was a simple rule-based flow with a GPT wrapper, and the confusion mattered because they were budgeting for a full agentic deployment at three times the cost.

That gap is not unusual. Worldwide AI spending is forecast to reach $2.52 trillion in 2026, up 44% year-over-year (Gartner, Jan 2026). Boards that cannot tell one AI category from another are approving those budgets blind.

Does Board AI Fluency Actually Affect Financial Performance?

Only 26% of boards qualify as digitally and AI savvy, according to MIT CISR research. Those boards average 10.9 percentage points higher return on equity (ROE) than their industry peers. Boards lacking that savviness fall 3.8 percentage points below average (MIT CISR, Mar 2025).

That is a 14.7 percentage point spread in ROE between the two groups. Correlation does not prove causation, but it tracks with straightforward logic: boards that understand the technology make better capital allocation decisions around it.
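The spread is simple arithmetic on the two MIT CISR figures quoted above: one group sits above the peer average and the other below it, so the gaps add. A minimal check in Python, where the variable names are illustrative and not taken from the study:

```python
# ROE gaps relative to industry peers, in percentage points (MIT CISR, Mar 2025).
savvy_vs_peers = 10.9       # AI-savvy boards sit above the peer average
non_savvy_vs_peers = -3.8   # boards lacking that savviness sit below it

# The spread is the distance between the two groups.
spread = round(savvy_vs_peers - non_savvy_vs_peers, 1)
print(f"ROE spread: {spread} percentage points")
```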

What Does AI Fluency Actually Mean for Directors?

AI fluency does not mean writing Python scripts or fine-tuning models. It means understanding what each AI category does, where it creates value, and what can go wrong. That baseline changes the quality of every AI-related conversation in the boardroom.

I have spent 14 years building businesses, from running a 40+ person digital agency to launching an AI-powered DOOH ad network in Dhaka to reselling AI tools like BrickandMortar.AI for restaurant operations. From that experience, I break AI fluency into four categories that directors encounter most often.

The old framework of “LLMs vs. RPA vs. GenAI” is too narrow for 2026. AI agents have changed the picture entirely.

How Should Directors Think About Generative AI?

Generative AI tools like ChatGPT, Claude, Midjourney, and GitHub Copilot create text, images, video, and code. 60% of workers now use sanctioned AI tools, up from under 40% a year earlier (Deloitte, 2026). For most companies, this is no longer experimental.

The value is real. Generative AI can compress hours of briefing material into summaries, draft initial contract language, and produce campaign assets at a fraction of traditional cost.

Directors need to understand the core risk: hallucination. Even the best large language models still hallucinate at a minimum rate of 1.8%, with the worst exceeding 24% (Vectara Hallucination Leaderboard, Feb 2026). This is not a bug that disappears with the next update. It is built into how these systems work.
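To make those percentages concrete, here is a rough sketch of how a hallucination rate translates into flawed outputs at volume. The monthly volume of 1,000 outputs is a hypothetical figure, and the sketch assumes the leaderboard rates carry over to your own workloads, which they may not.

```python
def expected_wrong_outputs(outputs: int, hallucination_rate: float) -> float:
    """Expected number of outputs that contain a hallucination."""
    if not 0.0 <= hallucination_rate <= 1.0:
        raise ValueError("hallucination_rate must be between 0 and 1")
    return outputs * hallucination_rate


monthly_outputs = 1_000  # hypothetical volume, not a figure from the article

# Rates quoted above: 1.8% for the best models, 24% for the worst.
best_case = expected_wrong_outputs(monthly_outputs, 0.018)
worst_case = expected_wrong_outputs(monthly_outputs, 0.24)

print(f"Best model:  about {best_case:.0f} flawed outputs per {monthly_outputs}")
print(f"Worst model: about {worst_case:.0f} flawed outputs per {monthly_outputs}")
```

Even at the lowest quoted rate, the expected number of flawed outputs is not zero, which is why the review step matters more than the choice of model.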

IP exposure is the other board-level concern. AI copyright lawsuits doubled in 2025, from roughly 30 to over 70 active cases (Copyright Alliance, 2025). If your marketing team generates campaign imagery with AI, who owns it? The answer varies by jurisdiction and is still evolving.

The AI fluency question directors should ask: “Where in our workflow is it acceptable for outputs to be occasionally wrong, and where is it not?”

Why Are AI Agents the Category Boards Must Learn Now?

AI agents are the newest and most consequential category for board oversight. Unlike generative AI, which produces content when prompted, agents take actions on their own: chaining tasks, making decisions, executing workflows without a human in the loop. Gartner projects 40% of enterprise apps will embed task-specific AI agents by 2026, up from under 5% in 2025 (Gartner, Aug 2025).

The governance challenge differs from generative AI. When a generative AI tool hallucinates in a draft, a human can usually catch the error before it reaches a customer. When an agent processes a refund or adjusts pricing based on market data, the action is already taken.

I read about a case in the e-commerce space last year. A company deployed an agent to automate supplier communications and purchase order adjustments. Within the first week, the agent renegotiated a delivery timeline based on inventory data that was 48 hours stale.

The supplier shipped early, the warehouse wasn’t ready, and the company absorbed $12,000 in demurrage fees. The agent did exactly what it was designed to do. Nobody on the board had asked what happens when the data feeding the agent is wrong.
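One way to answer that question in advance is a freshness check that runs before the agent acts. The sketch below is an illustration, not the system from the case: the four-hour threshold and the function names are assumptions, and a real deployment would set the limit per data source.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical policy: inventory data older than this is too stale to act on.
MAX_DATA_AGE = timedelta(hours=4)


def is_fresh(last_updated: datetime, now: datetime,
             max_age: timedelta = MAX_DATA_AGE) -> bool:
    """True if the data snapshot is recent enough for the agent to use."""
    return now - last_updated <= max_age


def decide(last_updated: datetime, now: datetime) -> str:
    """Let the agent proceed on fresh data, otherwise hand off to a person."""
    return "proceed" if is_fresh(last_updated, now) else "escalate_to_human"


now = datetime(2026, 1, 15, 9, 0, tzinfo=timezone.utc)
stale_snapshot = now - timedelta(hours=48)   # the 48-hour-old data from the case
recent_snapshot = now - timedelta(hours=1)

print(decide(stale_snapshot, now))
print(decide(recent_snapshot, now))
```

The design choice is that stale data triggers an escalation rather than a silent failure, so a person decides whether the renegotiation should go ahead.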

75% of companies plan agentic AI deployment within two years, but only 21% have governance mature enough to handle it (Deloitte, 2026). Singapore recognised this gap early. IMDA launched the world’s first Model AI Governance Framework for Agentic AI in January 2026 (IMDA Singapore, Jan 2026).

Is Operational Automation Still Worth Board Attention?

Operational automation (RPA, workflow tools, process mining) is the least glamorous AI category and arguably the most reliable. It automates repetitive, rule-based tasks: data entry, invoice processing, report generation, system-to-system data transfers. Predictable outputs, calculable returns.

Where boards go wrong is ignoring this category in favour of flashier investments. I have seen companies pour resources into generative AI pilots while their back-office teams still manually copy data between spreadsheets. Unsexy automation often delivers the fastest, most measurable ROI.

There is a pattern I notice across the startups I advise: founders chase generative AI headlines while their operations teams drown in manual work. When I ask “Have you automated invoice reconciliation yet?” the answer is usually no. What surprises founders is that building useful AI tools no longer requires a developer — domain expertise plus the right prompt structure is often enough to produce something real.

A $200/month RPA tool would save 15 hours per week, but it is not exciting enough to make the board deck. Directors should ask whether these low-risk, high-return opportunities have been captured before funding experimental work.
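The return on that example is easy to check. Fifteen hours a week is roughly 65 hours a month, so the tool pays for itself if an hour of staff time costs more than about $3. A small sketch of the calculation, where the $30 hourly staff cost is a hypothetical figure for illustration:

```python
WEEKS_PER_MONTH = 52 / 12  # average number of weeks in a month


def monthly_hours_saved(hours_per_week: float) -> float:
    """Convert weekly hours saved into an average monthly figure."""
    return hours_per_week * WEEKS_PER_MONTH


def break_even_hourly_cost(tool_cost_per_month: float, hours_per_week: float) -> float:
    """Hourly staff cost above which the tool pays for itself."""
    return tool_cost_per_month / monthly_hours_saved(hours_per_week)


tool_cost = 200.0    # USD per month, from the example above
hours_saved = 15.0   # hours per week, from the example above
staff_cost = 30.0    # USD per hour, a hypothetical figure

hours = monthly_hours_saved(hours_saved)
break_even = break_even_hourly_cost(tool_cost, hours_saved)
net_saving = hours * staff_cost - tool_cost

print(f"Hours saved per month: {hours:.0f}")
print(f"Break-even hourly cost: ${break_even:.2f}")
print(f"Net monthly saving at ${staff_cost:.0f}/hour: ${net_saving:,.0f}")
```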

Operational automation sits in a comfortable governance zone. The system follows explicit rules and outputs are predictable. Audit and documentation are the primary needs: ensuring rules are correct, documented, and updated when processes change.

How Should Boards Match Governance to Each AI Category?

Only 7% of companies have fully embedded AI governance despite 93% using AI in some form (Trustmarque, 2025). Understanding the four categories is step one. Step two is building governance that matches each risk profile.

AI fluency at the governance level means treating each category differently:

For generative AI, establish verification protocols. Any output that informs a business decision or reaches a customer should pass through human review. Define approved use cases. Revisit them quarterly.

For AI agents, set boundary controls and kill switches. Agents need clear limits on what they can authorise, spend, and change. Build mandatory human-in-the-loop checkpoints for high-stakes actions. Singapore’s IMDA framework maps this into five consequence tiers with a named accountable person at each. The Singapore board guide to AI agent accountability has a practical classification template boards can adopt directly.

For operational automation, focus on audit and documentation. Governance here ensures rules are correct and updated when processes change. Lower risk, but not zero.
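The agent controls described above (a limit on what the agent can authorise, a human checkpoint for high-stakes actions, and a kill switch) can be sketched in a few lines. This is an illustrative model only. The class name, thresholds, and three outcomes are assumptions, and it does not reproduce the IMDA consequence tiers.

```python
from dataclasses import dataclass


@dataclass
class AgentGuard:
    """Hypothetical boundary controls for an agent that can spend money."""
    spend_limit: float        # hard cap: the agent may never authorise more
    review_threshold: float   # above this, a human must approve first
    kill_switch_on: bool = False

    def __post_init__(self) -> None:
        if self.review_threshold > self.spend_limit:
            raise ValueError("review_threshold must not exceed spend_limit")

    def check(self, amount: float) -> str:
        """Classify a proposed action before the agent executes it."""
        if self.kill_switch_on or amount > self.spend_limit:
            return "blocked"
        if amount > self.review_threshold:
            return "needs_human_approval"
        return "allowed"


guard = AgentGuard(spend_limit=5_000, review_threshold=500)

small = guard.check(120)     # routine action
medium = guard.check(2_000)  # high-stakes, needs a person
large = guard.check(9_000)   # beyond the agent's authority

guard.kill_switch_on = True
after_kill = guard.check(120)  # everything stops once the switch is on

print(small, medium, large, after_kill)
```

The point for a board is that each threshold is an explicit, reviewable number with an owner, not a behaviour buried in the agent's prompt.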

AI governance itself is not a technology category. It is a standing board function, the same way financial oversight is. 62% of directors now set aside agenda time for AI discussions, but fewer than 25% have board-approved AI policies (NACD, 2025). Discussion without policy is decisions without structure.

What About Regulation? The EU AI Act and Singapore’s Framework

The regulatory environment is moving faster than most boards realise. The EU AI Act, Article 4, requires AI literacy for personnel operating AI systems. The obligation itself has applied since February 2025, with enforcement beginning August 2026. Penalties under the Act reach up to EUR 35 million or 7% of global annual turnover for the most serious violations (European Commission, 2025).

Only 39% of Fortune 100 companies disclose any form of board AI oversight (McKinsey, Dec 2025). If you operate in or sell into the EU, this is not abstract policy. Your board needs to verify that AI fluency training is in place before enforcement begins.

Singapore’s IMDA launched the world’s first governance framework specifically for agentic AI in January 2026 (IMDA Singapore, Jan 2026). For boards with operations in Southeast Asia, this is the template to watch. The way AI is reshaping strategy applies to governance just as much as it applies to marketing.

Where Should a Director Start Building AI Fluency?

If you sit on a board and are unsure where to begin, bring these three questions to your next meeting.

Where are we actually using AI right now? Not where the strategy deck says, but where employees use it day to day, including unsanctioned tools. 60% of workers now use sanctioned AI tools (Deloitte, 2026). The rest may be using unsanctioned ones.

For each AI deployment, what is the failure mode? If the generative AI hallucinates, what happens? If the agent acts on stale data, what is the fallback? Understanding failure modes is the foundation of AI fluency at the board level.

Do we have the right expertise to govern this? AI governance requires people who understand both the technology and the business context. If your board lacks that combination, consider an advisory role or a dedicated AI committee. I speak on this topic regularly with boards and executive teams across Asia.

Frequently Asked Questions

What AI skills do board directors need?

Board directors need AI fluency across four categories: generative AI, AI agents, operational automation, and AI governance. They do not need to code or build models. They need to evaluate risk profiles, ask the right questions about failure modes, and ensure governance structures match the technology being deployed. Only 26% of boards currently qualify as AI-savvy (MIT CISR, 2025).

How should boards govern AI in their organisations?

Match governance to risk. Generative AI needs human verification protocols. AI agents need boundary controls and kill switches for high-stakes actions. Operational automation needs audit trails. Treat AI governance as a standing board function, not a one-time agenda item. Only 7% of companies have fully embedded AI governance despite 93% using AI (Trustmarque, 2025).

What is the EU AI Act's impact on board directors?

Article 4 of the EU AI Act mandates AI literacy for personnel operating AI systems, with enforcement starting August 2026. Penalties under the Act can reach EUR 35 million or 7% of global annual turnover for the most serious violations. Boards with EU operations need to verify that AI fluency programmes are in place and that governance disclosures meet the new requirements before enforcement begins.

What are AI agents and why should boards care?

AI agents are systems that take actions on their own, chaining tasks and making decisions without human intervention. Unlike generative AI, where a human reviews the output, agents act first. Gartner projects 40% of enterprise apps will embed AI agents by 2026. Boards need boundary controls, kill switches, and mandatory human checkpoints for high-stakes agent actions.

How do AI-savvy boards outperform financially?

MIT CISR research from March 2025 found that AI-savvy boards average 10.9 percentage points higher return on equity than industry peers. Non-savvy boards trail by 3.8 percentage points. The 14.7 percentage point spread suggests that boards with AI fluency make better capital allocation and risk decisions around AI investments.

I work with founders and boards on AI governance, growth strategy, and scaling operations across Asia. If your board is working through AI fluency, let’s talk.

For a practical example of what AI agents actually do day-to-day, see how I built a private AI assistant that runs my morning briefings. For the full 2026 map of enterprise SaaS, open-source, and framework options, see AI Agents for Business 2026.